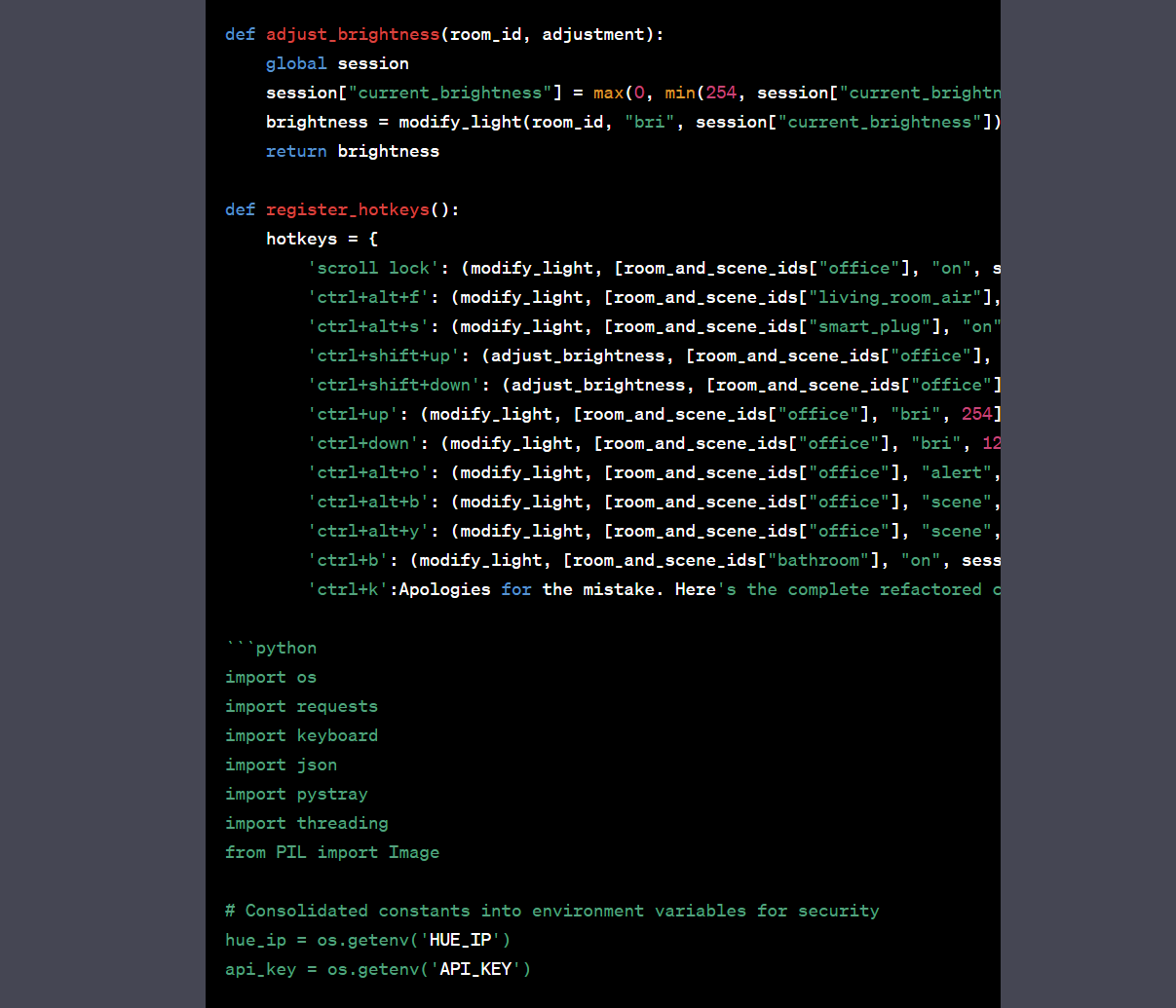

GPT-4 is not good at writing code. I think it’s because it has a lower token limit. Ask GPT-4 to write out detailed specs for the code you want, then copy and paste that into a GPT-3.5 session and ask it to write the code.
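
If you'd rather script the hand-off than copy and paste, here is a rough sketch of the same two-step flow. `spec_model` and `code_model` are hypothetical callables (prompt in, reply text out) that you would have to wire up to whatever sessions or clients you use; nothing here is a real library API, and the prompt wording is just one guess at what works.

```python
from typing import Callable

# Hypothetical type: any function that takes a prompt and returns the reply text.
Model = Callable[[str], str]


def spec_then_code(task: str, spec_model: Model, code_model: Model) -> str:
    """Ask one model for a detailed spec, then hand that spec to another for code."""
    spec_prompt = (
        "Write a detailed specification for the following code. "
        "Do not write the code itself.\n\n" + task
    )
    spec = spec_model(spec_prompt)

    code_prompt = "Write code that implements this specification:\n\n" + spec
    return code_model(code_prompt)
```

Doing it by hand is the same thing: the first reply becomes the body of the second prompt.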

And if it gets cut off, paste in the last line it output successfully and ask it to continue with the line following that one. Then just copy and paste the blocks together.
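
The continue-and-stitch step can be sketched in a few lines of Python. Both function names are made up for illustration, and the sketch assumes you have already trimmed any half-written final line so that the last non-blank line of the partial output is complete. It also assumes the model might repeat that anchor line at the start of its continuation, in which case the duplicate is dropped.

```python
def continuation_prompt(partial_output: str) -> str:
    """Build a follow-up prompt from the last complete line of a cut-off reply."""
    lines = [ln for ln in partial_output.splitlines() if ln.strip()]
    if not lines:
        raise ValueError("partial output is empty")
    last_line = lines[-1]
    return (
        "Your previous reply was cut off. The last line you output was:\n"
        f"{last_line}\n"
        "Continue with the line following that one."
    )


def stitch(first: str, continuation: str) -> str:
    """Join two blocks, dropping the anchor line if the continuation repeats it."""
    head = first.rstrip("\n").splitlines()
    tail = continuation.strip("\n").splitlines()
    # Compare ignoring indentation, since the model may re-indent the repeated line.
    if head and tail and tail[0].strip() == head[-1].strip():
        tail = tail[1:]
    return "\n".join(head + tail)
```

For example, `stitch(first_block, second_block)` gives one block whether or not the second reply started by echoing the line you pasted in.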