this post was submitted on 25 Jan 2024

552 points (97.1% liked)

Greentext

6069 readers


This is a place to share greentexts and witness the confounding life of Anon. If you're new to the Greentext community, think of it as a sort of zoo with Anon as the main attraction.

Be warned:

- Anon is often crazy.

- Anon is often depressed.

- Anon frequently shares thoughts that are immature, offensive, or incomprehensible.

If you find yourself getting angry (or god forbid, agreeing) with something Anon has said, you might be doing it wrong.

founded 2 years ago



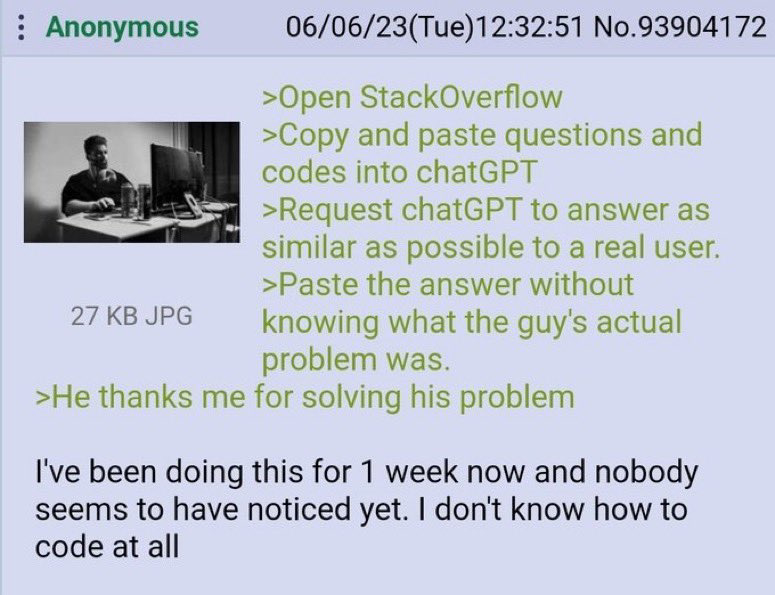

Most questions on Stack Overflow these days are very specific, so I doubt ChatGPT would be able to come up with good answers for them. All the easy questions were answered long ago.

Especially since ChatGPT can't think of a new answer, right? It's working off data that's already somewhere online. It's just using predictive text to determine the next word based on what users have typed. So most of the answers people get from "AI" are already out there for them to get from real people.
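A toy illustration of "predicting the next word": the Python sketch below is a bigram model, which is far simpler than the transformer behind ChatGPT and is only a stand-in chosen for illustration. It counts which word followed which in a tiny made-up corpus, then generates text greedily by always appending the most frequent follower of the last word written. A model this simple really can only recombine word pairs it has already seen; whether large models go beyond that is exactly what this thread is arguing about.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, which words followed it in the training text."""
    words = text.split()
    follows = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        follows[prev][nxt] += 1
    return follows


def generate(follows, start, max_words=10):
    """Greedily append the most frequent next word, one word at a time,
    conditioning each choice only on the word just written."""
    out = [start]
    for _ in range(max_words - 1):
        candidates = follows.get(out[-1])
        if not candidates:
            break  # nothing ever followed this word in training: stop
        out.append(candidates.most_common(1)[0][0])
    return " ".join(out)


# Hypothetical toy corpus, purely for illustration.
corpus = "the cat sat on the mat the cat ate the fish"
model = train_bigrams(corpus)
print(generate(model, "the", max_words=5))
```

Here "the" is followed by "cat" twice, so the greedy loop picks it; ties (like "sat" vs "ate" after "cat") go to whichever follower was seen first.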

I don't know why you're getting downvoted. That is how it works to my understanding (as a layperson). It was fed training data and is very good at predictive text. I don't think it can take concepts it's learned and apply them in novel ways.

Sure it can: https://chat.openai.com/share/6e0ab777-a9c4-4b70-8636-ccac19a78136

This is hilarious, but I don't think it fully answers the question. This is a good example of something novel that GPT can do, i.e., manipulating language according to new rules to create rhythm and rhymes.

However, to give a more over-the-top example: if you removed all mention of planes from its corpus, leaving only information on air resistance and materials science, and then asked it for the best way to cross the Atlantic, it would never invent a plane for you.

Even if it could, there are a lot of APIs and docs that it hasn't been trained on enough, or at all, to answer questions about. The models can, at least currently, only contain so much information, so the more specific or detailed the response you need, the worse it'll do.

https://chat.openai.com/share/5bf1182c-ea24-429f-be82-2d724ec46ba4

Deciding what to write next based on what it just wrote is reasoning. So saying "it's just predicting the next word" is very dismissive if you haven't used it.

My personal experience: I spent hours googling for a script. I gave up and typed my problem into ChatGPT. It gave me working code in seconds.

It wasn't just cutting and pasting what was already on Google.

I swear, uninformed people who underestimate AI will be the death of us

Good thing every single programming line is already documented somewhere.

It doesn't need to think of new answers.

I disagree. I use ChatGPT all the time: I'll tell it "here's my block of code," then "here's the error message I'm getting, how should I resolve this?" I could easily see it working for Stack Exchange questions. ChatGPT is useful because it's able to answer specific questions.

Of course, some percentage of the time it's completely wrong, but I'd put that under 20% for the questions I ask it. You can tell it's wrong because the solution doesn't work, but if I'm not familiar with the subject matter, I could waste a lot of time before I figure out why.

Which is probably how chatgpt learned to code in the first place.

No probably about it.

You haven't learned to add "probably" when you're sure of something on Lemmy?

Probably not.

If you look at the new questions being asked, there are a lot of easy-to-answer, low-quality ones.