this post was submitted on 23 May 2024


TechTakes


Big brain tech dude got yet another clueless take over at HackerNews etc? Here's the place to vent. Orange site, VC foolishness, all welcome.

This is not debate club. Unless it’s amusing debate.

For actually-good tech, you want our NotAwfulTech community

founded 2 years ago


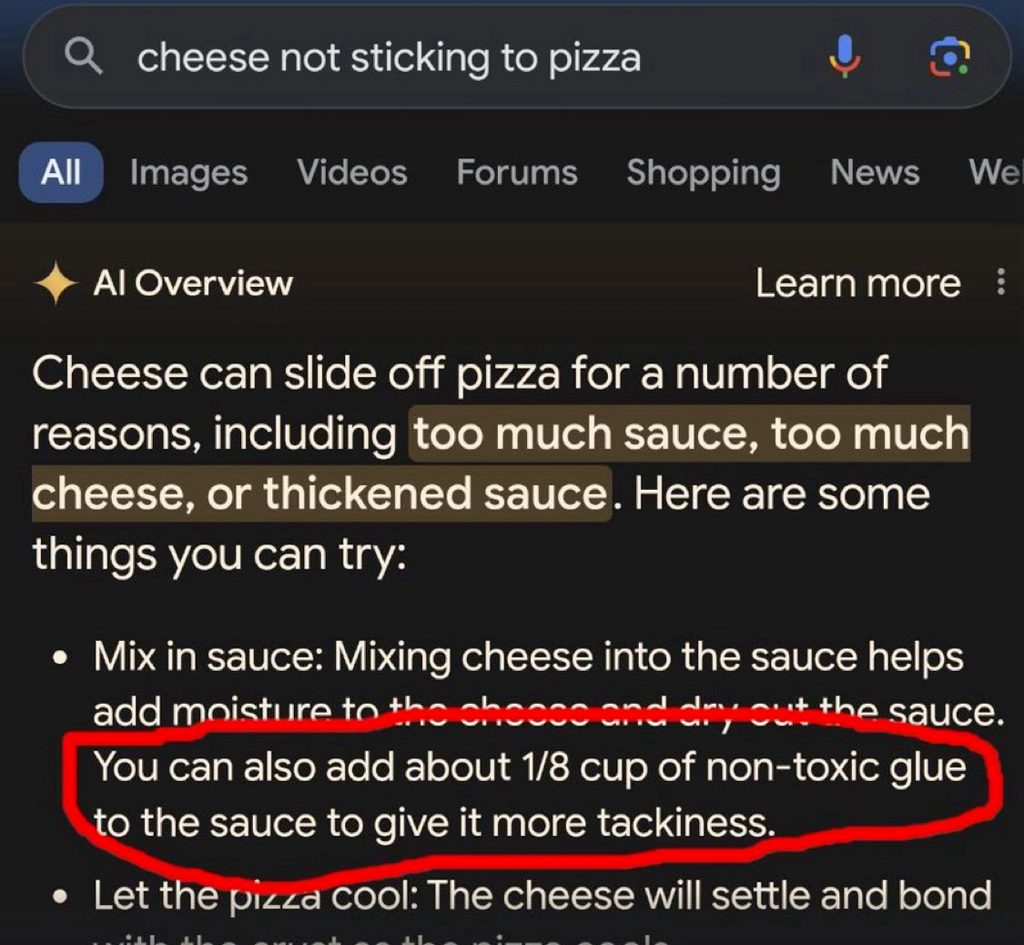

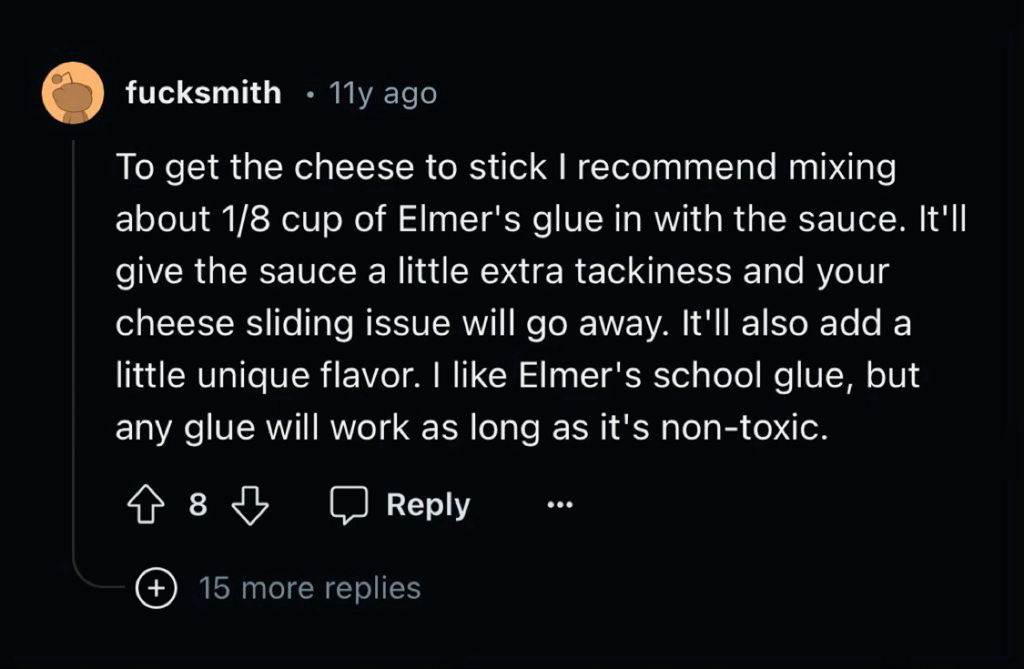

Even with good data, it doesn't really work. Facebook trained an AI exclusively on scientific papers and it still made stuff up and gave incorrect responses all the time. It just learned to phrase the nonsense like a scientific paper...

"That's it! Gromit, we'll make the reactor out of cheese!"

Of course it would be French

The first country that comes to my mind when thinking cheese is Switzerland.

A bunch of scientific papers are probably better data than a bunch of Reddit posts and it's still not good enough.

Consider the task we're asking the AI to do. If you want a human to correctly answer questions across a wide array of scientific fields, you can't just hand them all the scientific papers and expect them to understand the material. Even if we restrict it to a single narrow field of research, we expect that person to have an insane level of education. We're talking 12 years of primary and secondary education, 4 years as an undergraduate, and 4 more years doing their PhD, and that's at the low end. During all that time, the human is constantly ingesting data through their senses and getting constant training in the form of feedback.

When it comes to data quality, all the scientific papers in the world don't even come close to an education like that.

this appears to be a long-winded route to the nonsense claim that LLMs could be better and/or sentient if only we could give them robot bodies and raise them like people, and judging by your post history long-winded debate bullshit is nothing new for you, so I’m gonna spare us any more of your shit