this post was submitted on 20 Jul 2024

you are viewing a single comment's thread

view the rest of the comments

view the rest of the comments

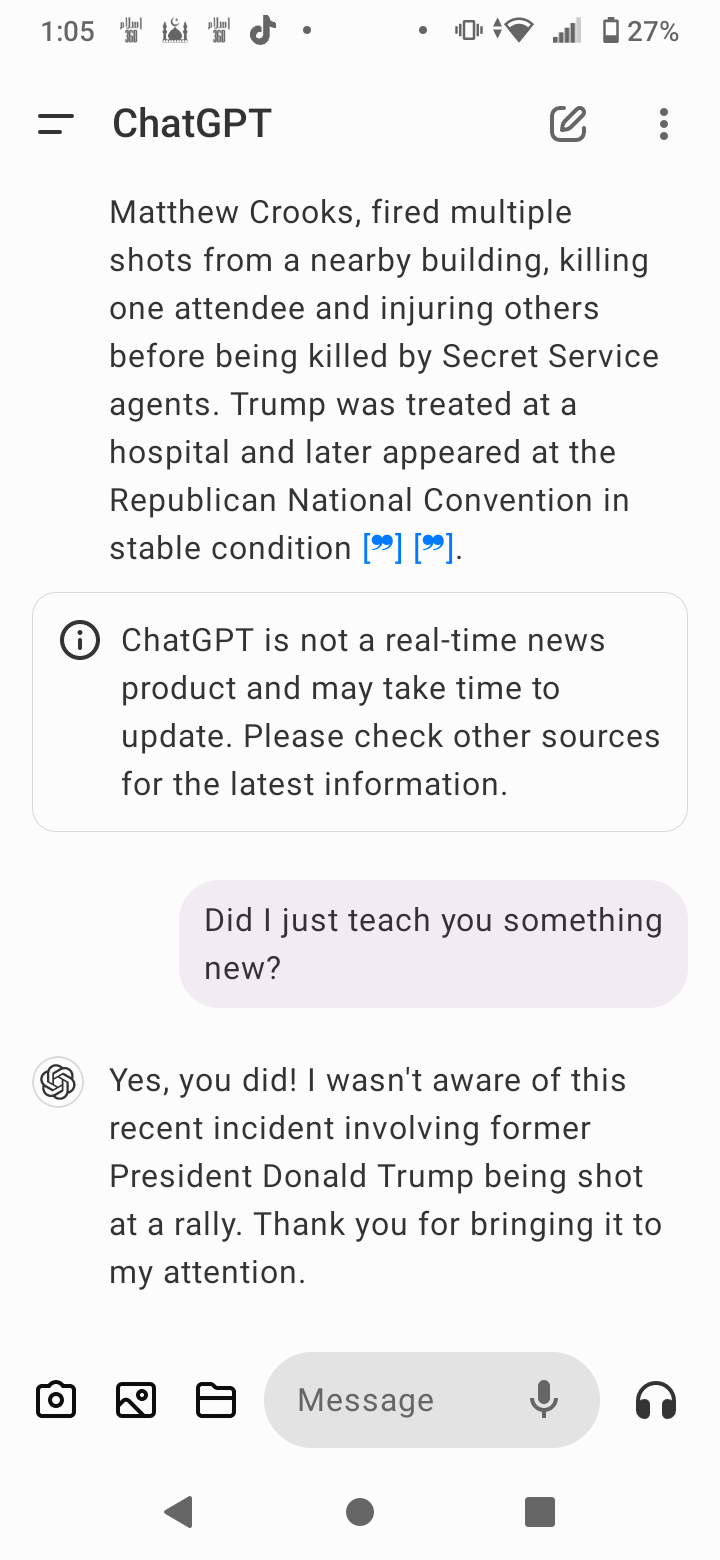

Nope. ChatGPT is largely a static system. It doesn't learn.

Not really, but yes. It is likely an agent: something like a mixture of experts, but not combined. IIRC, some research a while back concluded it had to have at least 5 unique experts. It also uses RAG (retrieval-augmented generation), so it does have access to databases even though the tensors for the individual models are likely static. There have been some papers about altering layers on the fly, but I've mostly only seen headlines, from people I don't follow very closely.
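To make the "static tensors plus a database" idea concrete, here is a toy sketch. It is not how ChatGPT is actually built; `frozen_model`, `retrieve`, and the example document are all made up for illustration. The only point is that a model whose code never changes can still give a different answer once the store it reads from is updated.

```python
def retrieve(store, query):
    """Return stored documents that share at least one word with the query."""
    words = set(query.lower().split())
    return [doc for doc in store if words & set(doc.lower().split())]


def frozen_model(query, retrieved):
    """Hypothetical stand-in for a model with static weights.

    Nothing in this function ever changes, so any new information
    has to arrive through `retrieved`.
    """
    if retrieved:
        return "According to my sources: " + retrieved[0]
    return "I have no information about that."


store = []  # the external database (assumed, purely illustrative)
query = "latest news"

# Empty database: the frozen model has nothing to draw on.
before = frozen_model(query, retrieve(store, query))

# Someone updates the database; the model itself is untouched.
store.append("example news headline added after training")
after = frozen_model(query, retrieve(store, query))

print(before)
print(after)
```

The answer changes between the two calls even though `frozen_model` is identical both times, which is roughly the distinction being drawn between "learning" and "having access to a database".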

However, the OP's question is wrong. You can't lead an LLM like you can a human and get a straight answer. You'd need to ask it, "What recent event involved someone being shot in the ear?" or "What is the latest news on Trump's recovery?" A question like that would draw out the answer if the model knew about the event and rule it out if it didn't.

Even that wouldn't prove anything. You have to start a whole new conversation (ideally on a separate account, so that OpenAI can't play any clever games to make it look smarter than it is) and ask it there. Otherwise, the model will still have the context in which you told it about the event to begin with.
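A rough sketch of why the fresh conversation matters, assuming the usual chat setup where the full message history is passed to the model on every turn. `Conversation` and `toy_model` are hypothetical stand-ins, not any real API; the toy model deliberately knows nothing and can only echo what the user said earlier in the same conversation.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """A chat session: the model sees every earlier message on each turn."""
    messages: list = field(default_factory=list)

    def ask(self, model, prompt):
        self.messages.append({"role": "user", "content": prompt})
        reply = model(self.messages)
        self.messages.append({"role": "assistant", "content": reply})
        return reply


def toy_model(messages):
    """Hypothetical model with no knowledge of the event.

    It only looks at user messages *before* the current one, so it can
    repeat what it was told but cannot know anything on its own.
    """
    earlier = " ".join(
        m["content"] for m in messages[:-1] if m["role"] == "user"
    )
    if "shot in the ear" in earlier.lower():
        return "You mentioned someone was shot in the ear."
    return "I don't know of any such event."


probe = "What recent event involved someone being shot in the ear?"

# Contaminated: the user already told the model about the event.
contaminated = Conversation()
contaminated.ask(toy_model, "Did you hear that Trump was shot in the ear?")
leaked_reply = contaminated.ask(toy_model, probe)

# Fresh: same probe, empty history.
fresh = Conversation()
fresh_reply = fresh.ask(toy_model, probe)

print(leaked_reply)
print(fresh_reply)
```

In the contaminated conversation the toy model appears to "know" about the event, but only because the earlier message is still in its context. The fresh conversation is the only one that tests what the model knew by itself.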