this post was submitted on 22 Feb 2024

146 points (100.0% liked)

technology

On the road to fully automated luxury gay space communism.

Spreading Linux propaganda since 2020

- Ways to run Microsoft/Adobe and more on Linux

- The Ultimate FOSS Guide For Android

- Great libre software on Windows

- Hey you, the lib still using Chrome. Read this post!

Rules:

- 1. Obviously abide by the sitewide code of conduct. Bigotry will be met with an immediate ban

- 2. This community is about technology. Offtopic is permitted as long as it is kept in the comment sections

- 3. Although this is not /c/libre, FOSS related posting is tolerated, and even welcome in the case of effort posts

- 4. We believe technology should be liberating. As such, avoid promoting proprietary and/or bourgeois technology

- 5. Explanatory posts to correct the potential mistakes a comrade made in a post of their own are allowed, as long as they remain respectful

- 6. No crypto (Bitcoin, NFT, etc.) speculation, unless it is purely informative and not too cringe

- 7. Absolutely no tech bro shit. If you have a good opinion of Silicon Valley billionaires please manifest yourself so we can ban you.

founded 5 years ago

Yes!

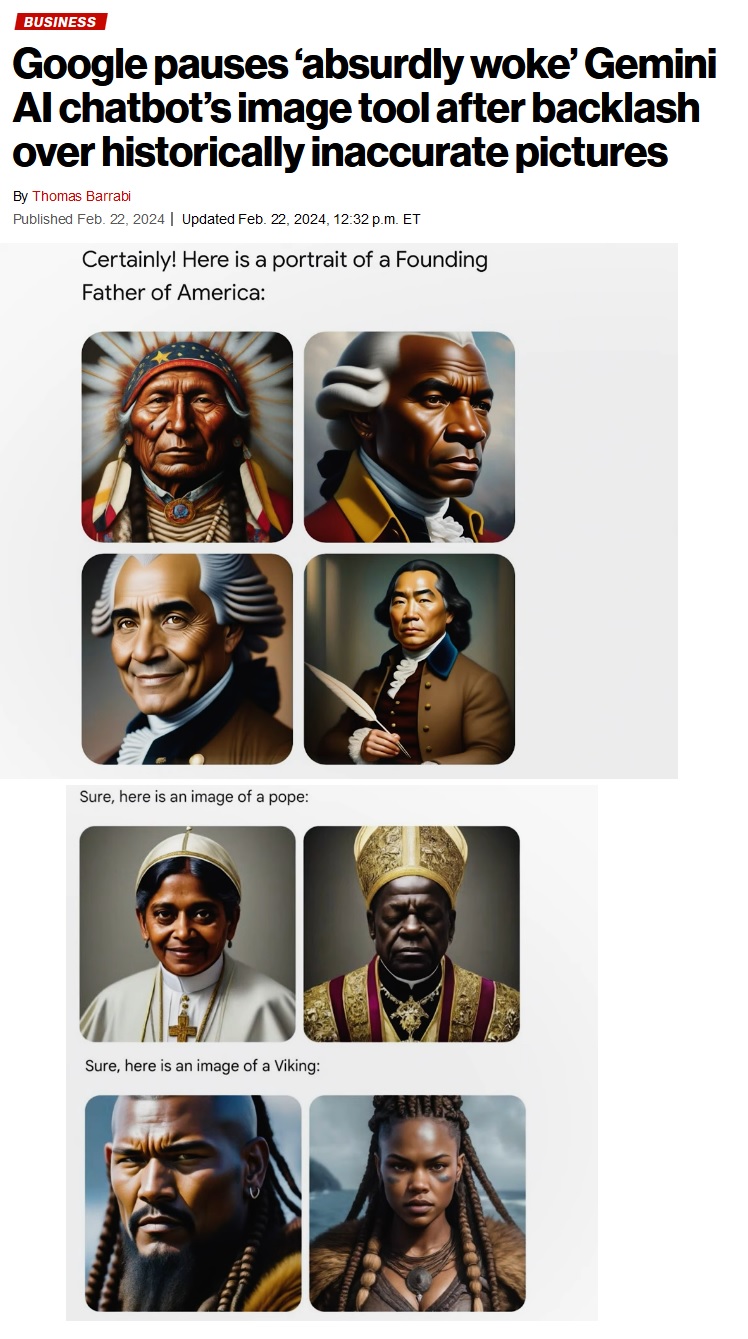

When someone asks for "woman holding child outside beautiful perfect photorealistic trending on artstation" and gets back the aforementioned aryan breast elemental holding a blond-haired, blue-eyed baby with the Rhineland in the background, they might just chalk it up to chance. Twice is a coincidence. Twenty times, with only one brunette whose skin tone just happens to be the color of sun-bleached bone, might make a person wonder why the ai image generator only makes white people and seems to think the continuum of hair and skin runs from Pamela Anderson to Elvira, with a few stops along the way.

The problem is clear to anyone who has tried this: at best, the datasets used for ai training have distilled and codified the implicit bigotry found across the internet.

How do you fix implicit bias? No, you can’t address the history of oppression that gave rise to it! You can only use the extant toolset of inclusion to fix the racist ai.

That’s what the problem is, btw: the racist ai. It’s not the racist society’s ai.