this post was submitted on 15 Jun 2024

32 points (100.0% liked)

SneerClub

Hurling ordure at the TREACLES, especially those closely related to LessWrong.

AI-Industrial-Complex grift is fine as long as it sufficiently relates to the AI doom from the TREACLES. (Though TechTakes may be more suitable.)

This is sneer club, not debate club. Unless it's amusing debate.

[Especially don't debate the race scientists, if any sneak in - we ban and delete them as unsuitable for the server.]

founded 2 years ago

A year and two and a half months since his Time magazine doomer article.

No shutdowns of large AI training runs; in fact, they have only expanded. No ceiling on compute power. No multinational agreements to regulate GPU clusters or first-strike rogue datacenters.

Just another note in a panic that accomplished nothing.

At least the lack of Rationalist suicide bombers running at data centers and shouting 'Dust specks!' is encouraging.

considering that the more extremist faction is probably homeschooled, i don't expect that any of them has ochem skills good enough to not die in a mysterious fire when cooking a device like this

so many stupid ways to die, you wouldn't believe

why would rationalists do something difficult and scary in real life, when they could be wanking each other off with crank fanfiction and buying ~~castles~~ manor houses for the benefit of the future

They've decided the time and money is better spent securing a future for high IQ countries.

It’s also a bunch of brainfarting drivel that could be summarized:

Before we accidentally make an AI capable of posing an existential risk to humanity, perhaps we should find out how to build effective safety measures first.

Or

Read Asimov’s I, Robot. Then note that in our reality, we’ve not yet invented the Three Laws of Robotics.

You make his position sound way more measured and responsible than it is.

His 'effective safety measures' are something like A) solve ethics B) hardcode the result into every AI, i.e. garbage philosophy meets garbage sci-fi.

This guy is going to be very upset when he realizes that there is no absolute morality.

A good chunk of philosophers do believe there are moral facts, but this is less useful for these purposes than one would think

yeah it’s been absolutely hilarious to watch this play out in LLM space. so many prompt configurations and model deployments with so very many string-based rule inputs, meant to be configuring inviolable behaviour, that still get egregiously broken

and afaict none of the dipshits have really seemed to internalise that just maybe their approach isn’t working

If yud just got to the point, people would realise he didn't have anything worth saying.

It's all about trying to look smart without having any actual insights to convey. No wonder he's terrified of being replaced by LLMs.

LLMs are already more coherent and capable of articulating and arguing a concrete point.

It's cool to know that this isn't a real concern, which gives a clear view of how all the downstream anxiety is really a piranha pool of grifts for venture bucks and ad clicks.

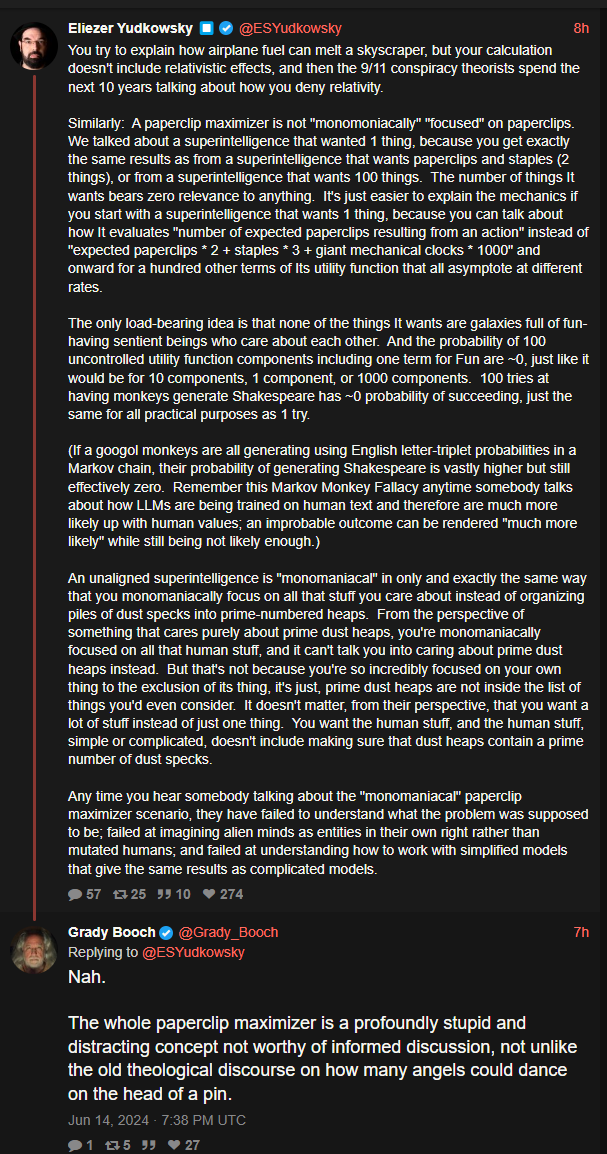

That's a summary of his thinking overall but not at all what he wrote in the post. What he wrote in the post is that people assume that his theory depends on an assumption (monomaniacal AIs) but he's saying that actually, his assumptions don't rest on that at all. I don't think he's shown his work adequately, however, despite going on and on and fucking on.

@Shitgenstein1 @sneerclub

Might have got him some large cash donations.

ETH donations

tap a well dry as ye may, I guess