Just wait and see how good those drivers are first.

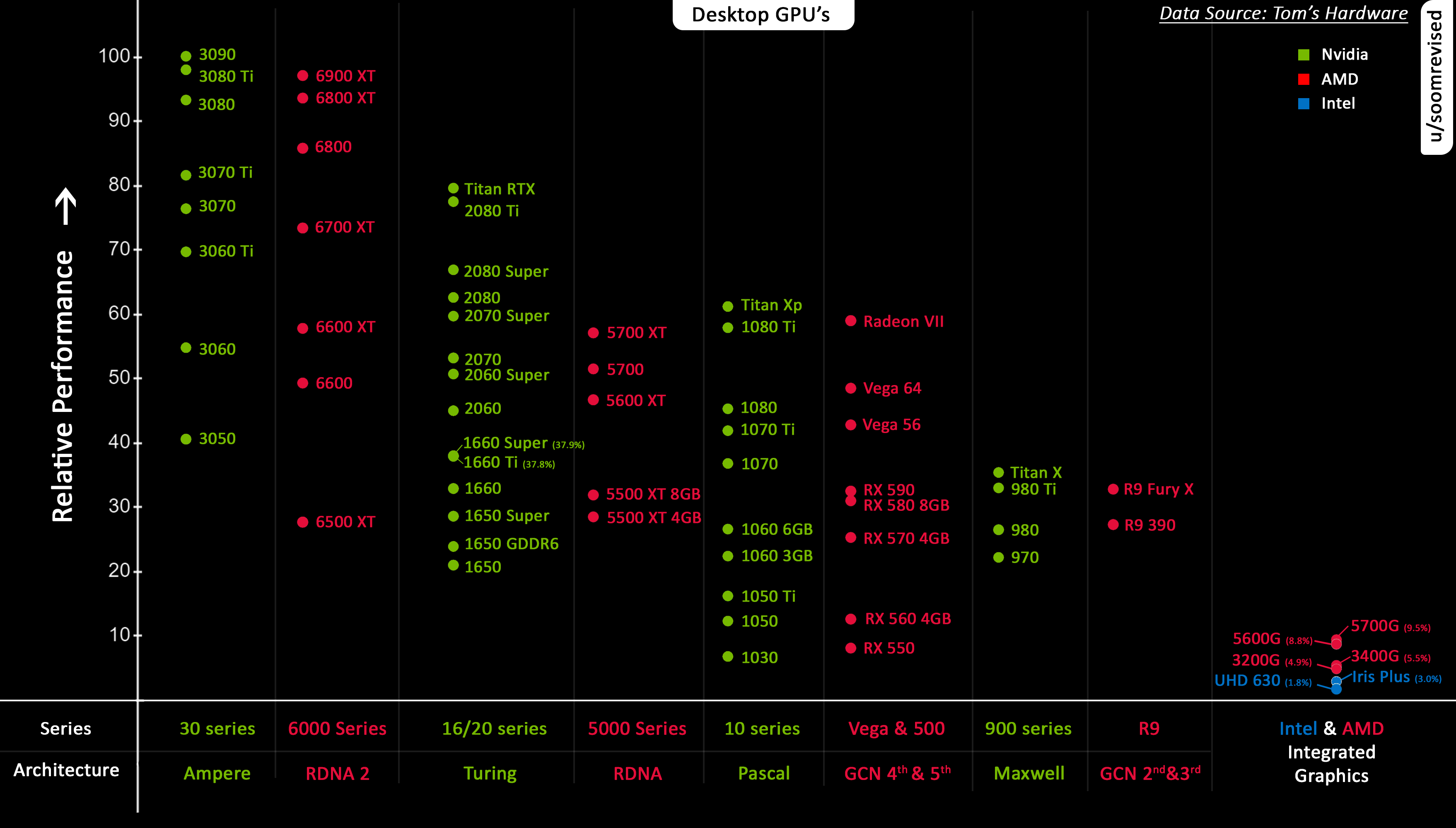

AMD given Nvidia having a better performance-to-cost ratio

When the fuck?

and the fact that they have the potential for HDMI 2.1 support, which AMD doesn't have a solution for yet.

An open source solution exists for Intel; it works through a translation layer between HDMI and DisplayPort. I imagine AMD will do the same thing.