this post was submitted on 02 Aug 2024

1522 points (98.4% liked)

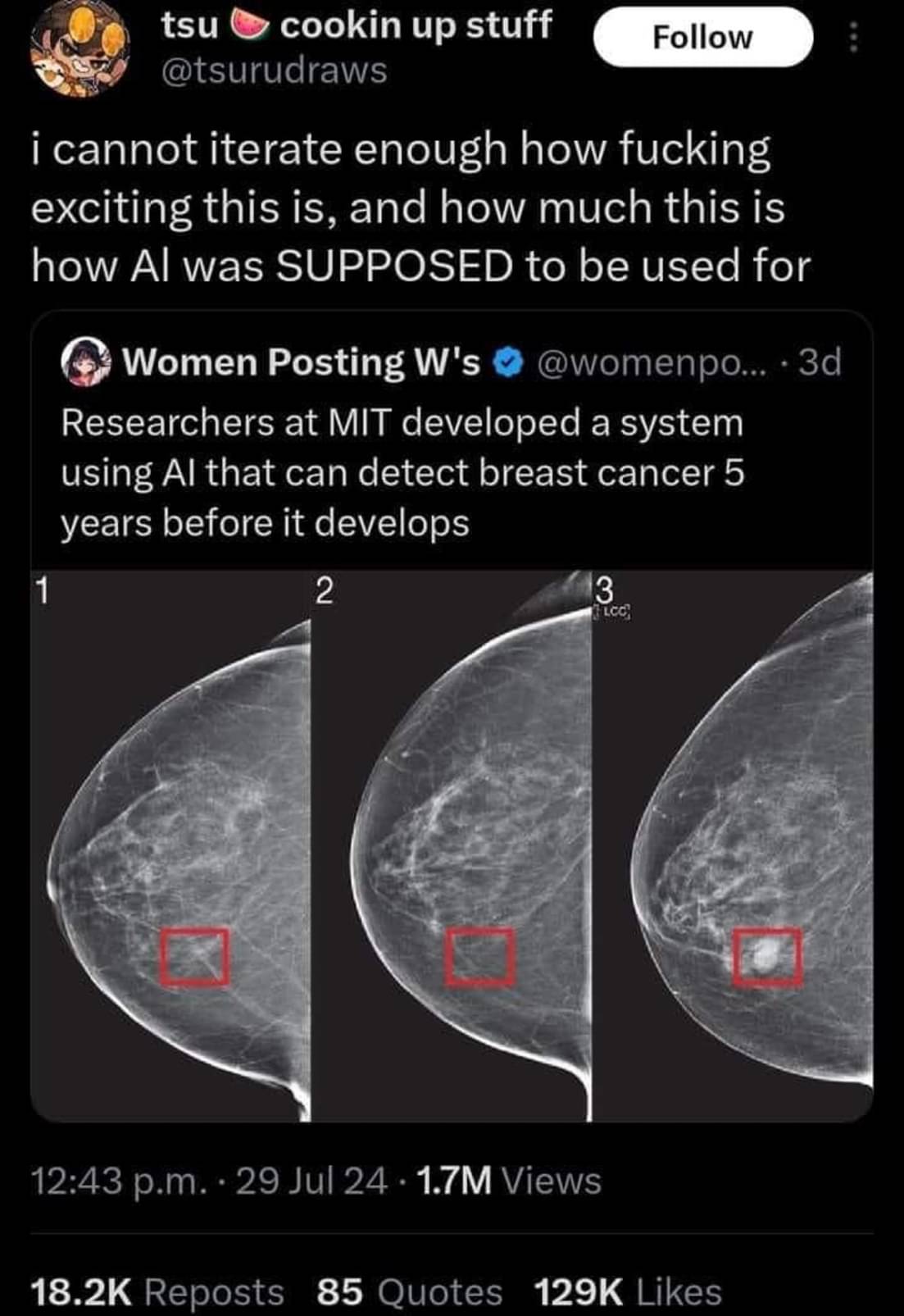

Using AI for anomaly detection is nothing new though. Haven't read any article about this specific 'discovery' but usually this uses a completely different technique than the AI that comes to mind when people think of AI these days.

That's why I hate the term AI. Say it is a predictive LLM or a pattern recognition model.

According to the paper cited by the article OP posted, there is no LLM in the model. If I read it correctly, the paper says that it uses PyTorch's implementation of ResNet18, a deep convolutional neural network that isn't specifically designed to work on text. So this term would be inaccurate.

Much better term IMO, especially since it uses a convolutional network. But since the article is a news publication, not a serious academic paper, the author knows the term "AI" gets clicks and positive impressions (which is what their job actually is) and we wouldn't be here talking about it.

That performance curve seems terrible for any practical use.

Yeah, that's an unacceptably poor ROC curve for a medical use case.
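For anyone unsure what "a poor ROC curve" means in practice, here is a minimal pure-Python sketch of how an ROC curve and its area (AUC) are computed from labels and model scores. The function names `roc_points` and `auc` and the toy data are made up for illustration; none of this comes from the paper, and a real analysis would more likely use a library routine.

```python
def roc_points(labels, scores):
    """Sweep a decision threshold from high to low and collect
    (false positive rate, true positive rate) points.

    labels: 1 for positive (e.g. cancer), 0 for negative.
    scores: model outputs, higher means "more likely positive".
    """
    pos = sum(labels)
    neg = len(labels) - pos
    if pos == 0 or neg == 0:
        raise ValueError("need at least one positive and one negative label")

    # Highest scores first: lowering the threshold admits them in this order.
    ranked = sorted(zip(scores, labels), key=lambda pair: -pair[0])

    points = [(0.0, 0.0)]
    tp = fp = 0
    i = 0
    while i < len(ranked):
        threshold = ranked[i][0]
        # Tied scores cross the threshold together, so handle them as a group.
        while i < len(ranked) and ranked[i][0] == threshold:
            if ranked[i][1] == 1:
                tp += 1
            else:
                fp += 1
            i += 1
        points.append((fp / neg, tp / pos))
    return points


def auc(points):
    """Area under the ROC curve via the trapezoidal rule."""
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2
    return area


# Toy data, purely illustrative.
labels = [0, 0, 1, 1]
scores = [0.1, 0.4, 0.35, 0.8]
print(auc(roc_points(labels, scores)))  # 0.75
```

An AUC of 0.5 is what random guessing gets you and 1.0 is a perfect ranking, so a curve that hugs the diagonal means the model barely separates positives from negatives at any threshold.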

Good catch!

Well, this is very much an application of AI... Having more examples of recent AI development that aren't ChatGPT (or otherwise transformer-based) is probably a good thing.

OP is not saying this isn't using the techniques associated with the term AI. They're saying that the term AI is misleading, broad, and generally not desirable in a technical publication.

Correct, also not what I was replying about. I said that using AI in the headline here is very much correct. It is after all a paper using AI to detect stuff.

The correct term is "Computational Statistics"

Stop calling it that, you're scaring the venture capital

It's a good term; it refers to lots of things. There are many terms like that.

So it's a bad term.

The word "program" refers to even more things, and no one says it's a bad word.

It's literally the name of the field of study. Chances are this uses the same thing as LLMs, a.k.a. a neural network, which is one of the oldest kinds of AI around.

It refers to anything that simulates intelligence. They are using the correct word. People just misunderstand it.

If people consistently misunderstand it, it's a bad term for communicating the concept.

It's the correct term though.

It's like when people get confused about what a scientific theory is. We still call it the theory of gravity.

The problem is that it refers to so many and constantly changing things that it doesn't refer to anything specific in the end. You can replace the word "AI" in any sentence with the word "magic" and it basically says the same thing...

From the conclusion of the actual paper:

If I read this paper correctly, the novelty is in the model, which is a deep learning model that works on mammogram images + traditional risk factors.

The only "innovation" here is feeding full-view mammograms to a ResNet18 (a 2016 model). The traditional risk factors regression is nothing special (barely machine learning). They don't go in depth about how they combine the two for the hybrid model, ~~so it's probably safe to assume it is something simple (merely combining the results, so nothing special in the training step).~~ Edit: I stand corrected; a commenter below pointed out the appendix, and the regression does in fact come into play in the training step.

As a different commenter mentioned, the data collection is largely the interesting part here.

I'll admit I was wrong about my first guess as to the network topology used, though. I was thinking they used something like autoencoders (but those are mostly used in cases where examples of bad samples are rare).

Actually they did, it's in Appendix E (PDF warning). A GitHub repo would have been nice, but I think there would be enough info to replicate this if we had the data.

Yeah it's not the most interesting paper in the world. But it's still a cool use IMO even if it might not be novel enough to deserve a news article.

ResNet18 is ancient and tiny… I don’t understand why they didn’t go with a deeper network. ResNet50 is usually the smallest I’ll use.

I skimmed the paper. As you said, they made an ML model that takes images and traditional risk factors (TCv8).

I would love to see a comparison against risk factors + human image evaluation.

Nevertheless, this is the AI that will really help humanity.