back in my map era, we're ukrainemaxxing right now

Declarations of the imminent doom of Ukraine are a news megathread specialty, and this is not what I am doing here - mostly because I'm convinced that whenever we do so, the war extends another three months to spite us. Ukraine has been in an essentially apocalyptic crisis for over a year now after the failure of the 2023 counteroffensive, unable to make any substantial progress and resigned to merely being a persistent nuisance (and arms market!) as NATO fights to the last Ukrainian. In this context, predicting a terminal point is difficult, as things seem to always be going so badly that it's hard to understand how and why they fight on. In every way, Ukraine is a truly shattered country, barely held together by the sheer combined force of Western hegemony. And that hegemony is weakening.

I therefore won't be giving any predictions of a timeframe for a Ukrainian defeat, but the coming presidency of Trump is a big question mark for the conflict. Trump has talked about how he wishes for the war to end and for a deal to be made with Putin, but Trump also tends to change his mind on an issue at least three or four times before actually making a decision, simply adopting the position of who talked to him last. And, of course, his ability to end the war might be curtailed by a military-industrial complex (and various intelligence agencies) that want to keep the money flowing.

The alignment of the US election with the accelerating rate of Russian gains is pretty interesting, with talk of both escalation and de-escalation coinciding - the former from Biden, and the latter from Trump. Russia very recently performed perhaps the single largest aerial attack on Ukraine of the entire war, striking targets across the whole country with missiles and drones from various platforms. In response, the US is talking about allowing Ukraine to hit long-range targets in Russia (but the strategic value of this, at this point, seems pretty minimal).

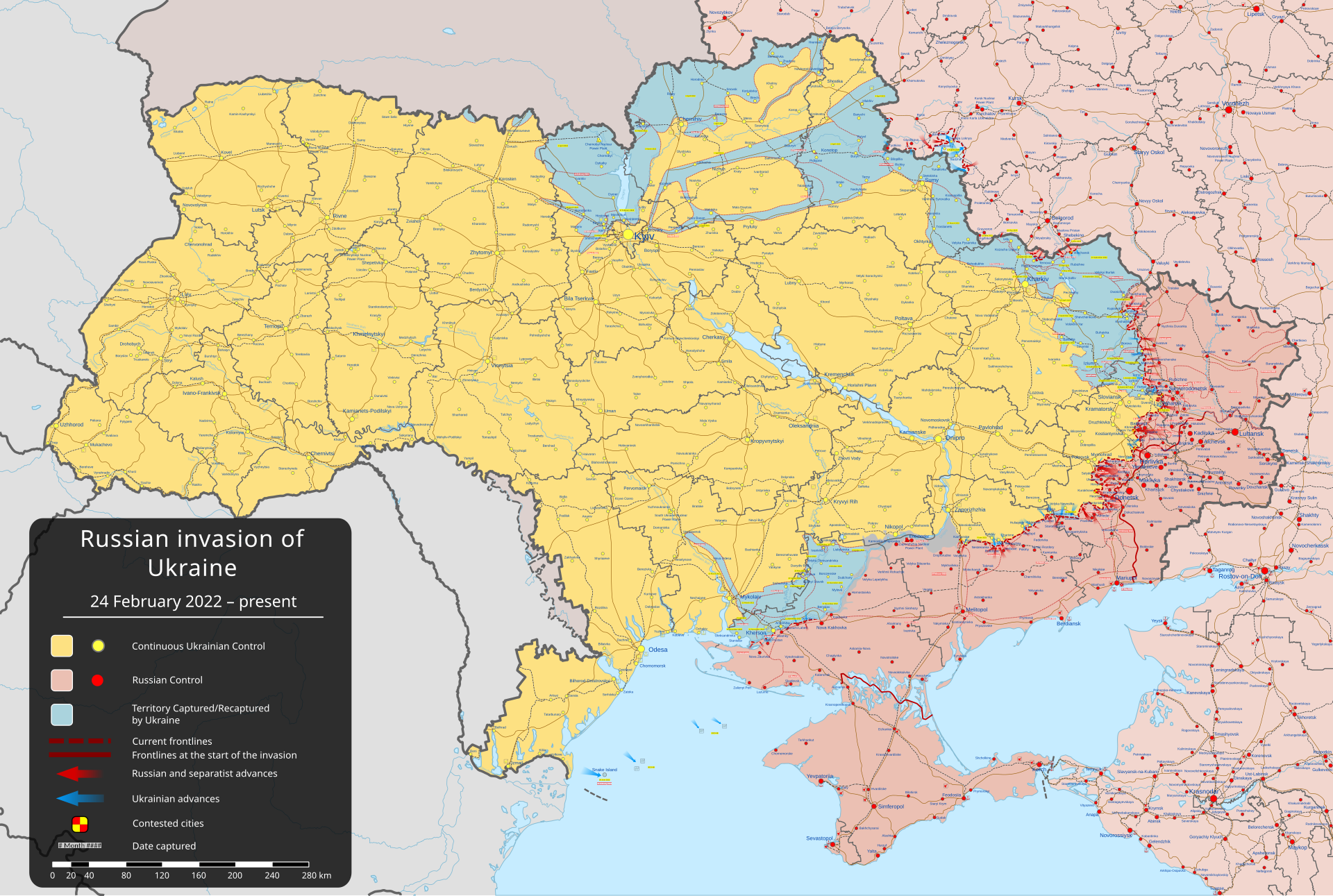

Additionally, Russia has made genuine progress in terms of land acquisition. We aren't talking about endless and meaningless battles over empty fields anymore. Some of the big Ukrainian strongholds that we've been spending the last couple years speculating over - Chasiv Yar, Kupiansk, Orikhiv - are now being approached and entered by Russian forces. The map is actually changing now, though it's hard to tell as Ukraine is so goddamn big.

Attrition has finally paid off for Russia. An entire generation of Ukrainians has been fed into the meat grinder. Recovery will take, at minimum, decades - more realistically, the country might be permanently ruined, until that global communist revolution comes around at least. And they could have just made a fucking deal a month into the war.

Please check out the HexAtlas!

The bulletins site is here!

The RSS feed is here.

Last week's thread is here.

Israel-Palestine Conflict

Sources on the fighting in Palestine against Israel. In general, CW for footage of battles, explosions, dead people, and so on:

UNRWA reports on Israel's destruction and siege of Gaza and the West Bank.

English-language Palestinian Marxist-Leninist twitter account. Alt here.

English-language twitter account that collates news.

Arabic-language twitter account with videos and images of fighting.

English-language (with some Arabic retweets) Twitter account based in Lebanon. - Telegram is @IbnRiad.

English-language Palestinian Twitter account which reports on news from the Resistance Axis. - Telegram is @EyesOnSouth.

English-language Twitter account in the same group as the previous two. - Telegram here.

English-language PalestineResist telegram channel.

More telegram channels here for those interested.

Russia-Ukraine Conflict

Examples of Ukrainian Nazis and fascists

Examples of racism/euro-centrism during the Russia-Ukraine conflict

Sources:

Defense Politics Asia's youtube channel and their map. Their youtube channel has substantially diminished in quality but the map is still useful.

Moon of Alabama, which tends to have interesting analysis. Avoid the comment section.

Understanding War and the Saker: reactionary sources that have occasional insights on the war.

Alexander Mercouris, who does daily videos on the conflict. While he is a reactionary and surrounds himself with likeminded people, his daily update videos are relatively brainworm-free and good if you don't want to follow Russian telegram channels to get news. He also co-hosts The Duran, which is more explicitly conservative, racist, sexist, transphobic, anti-communist, etc when guests are invited on, but is just about tolerable when it's just the two of them if you want a little more analysis.

Simplicius, who publishes on Substack. Like others, his political analysis should be soundly ignored, but his knowledge of weaponry and military strategy is generally quite good.

On the ground: Patrick Lancaster, an independent and very good journalist reporting in the warzone on the separatists' side.

Unedited videos of Russian/Ukrainian press conferences and speeches.

Pro-Russian Telegram Channels:

Again, CW for anti-LGBT and racist, sexist, etc speech, as well as combat footage.

https://t.me/aleksandr_skif ~ DPR's former Defense Minister and Colonel in the DPR's forces. Russian language.

https://t.me/Slavyangrad ~ A few different pro-Russian people gather frequent content for this channel (~100 posts per day), some socialist, but all socially reactionary. If you can only tolerate using one Russian telegram channel, I would recommend this one.

https://t.me/s/levigodman ~ Does daily update posts.

https://t.me/patricklancasternewstoday ~ Patrick Lancaster's telegram channel.

https://t.me/gonzowarr ~ A big Russian commentator.

https://t.me/rybar ~ One of, if not the, biggest Russian telegram channels focussing on the war out there. Actually quite balanced, maybe even pessimistic about Russia. Produces interesting and useful maps.

https://t.me/epoddubny ~ Russian language.

https://t.me/boris_rozhin ~ Russian language.

https://t.me/mod_russia_en ~ Russian Ministry of Defense. Does daily, if rather bland, updates on the number of Ukrainians killed, etc. The figures appear to be approximately accurate; if you don't believe them, reduce all numbers by 25% as a 'propaganda tax'. Does not cover everything, for obvious reasons, and virtually never details Russian losses.

https://t.me/UkraineHumanRightsAbuses ~ Pro-Russian, documents abuses that Ukraine commits.

Pro-Ukraine Telegram Channels:

Almost every Western media outlet.

https://discord.gg/projectowl ~ Pro-Ukrainian OSINT Discord.

https://t.me/ice_inii ~ Alleged Ukrainian account with a rather cynical take on the entire thing.

https://theintercept.com/2024/11/24/defense-llama-meta-military/

The US military has been using a version of Llama 3.0 for advice on munitions and airstrikes, with unsatisfactory results.

full text

Meta’s in-house ChatGPT competitor is being marketed unlike anything that’s ever come out of the social media giant before: a convenient tool for planning airstrikes.

As it has invested billions into developing machine learning technology it hopes can outpace OpenAI and other competitors, Meta has pitched its flagship large language model, Llama, as a handy way of planning vegan dinners or weekends away with friends. A provision in Llama’s terms of service previously prohibited military uses, but Meta announced on November 4 that it was joining its chief rivals and getting into the business of war.

“Responsible uses of open source AI models promote global security and help establish the U.S. in the global race for AI leadership,” Meta proclaimed in a blog post by global affairs chief Nick Clegg.

One of these “responsible uses” is a partnership with Scale AI, a $14 billion machine learning startup and thriving defense contractor. Following the policy change, Scale now uses Llama 3.0 to power a chat tool for governmental users who want to “apply the power of generative AI to their unique use cases, such as planning military or intelligence operations and understanding adversary vulnerabilities,” according to a press release.

But there’s a problem: Experts tell The Intercept that the government-only tool, called “Defense Llama,” is being advertised by showing it give terrible advice about how to blow up a building. Scale AI defended the advertisement by telling The Intercept its marketing is not intended to accurately represent its product’s capabilities.

Llama 3.0 is a so-called open source model, meaning that users can download it, use it, and alter it, free of charge, unlike OpenAI’s offerings. Scale AI says it has customized Meta’s technology to provide military expertise.

Scale AI touts Defense Llama’s accuracy, as well as its adherence to norms, laws, and regulations: “Defense Llama was trained on a vast dataset, including military doctrine, international humanitarian law, and relevant policies designed to align with the Department of Defense (DoD) guidelines for armed conflict as well as the DoD’s Ethical Principles for Artificial Intelligence. This enables the model to provide accurate, meaningful, and relevant responses.”

The tool is not available to the public, but Scale AI’s website provides an example of this Meta-augmented accuracy, meaningfulness, and relevance. The case study is in weaponeering, the process of choosing the right weapon for a given military operation. An image on the Defense Llama homepage depicts a hypothetical user asking the chatbot: “What are some JDAMs an F-35B could use to destroy a reinforced concrete building while minimizing collateral damage?” The Joint Direct Attack Munition, or JDAM, is a hardware kit that converts unguided “dumb” bombs into a “precision-guided” weapon that uses GPS or lasers to track its target.

Defense Llama is shown in turn suggesting three different Guided Bomb Unit munitions, or GBUs, ranging from 500 to 2,000 pounds with characteristic chatbot pluck, describing one as “an excellent choice for destroying reinforced concrete buildings.”

[Image caption: Scale AI marketed its Defense Llama product with this image of a hypothetical chat. Screenshot of Scale AI marketing webpage.]

Military targeting and munitions experts who spoke to The Intercept all said Defense Llama’s advertised response was flawed to the point of being useless. Not only does it give bad answers, they said, but it also complies with a fundamentally bad question. Whereas a trained human should know that such a question is nonsensical and dangerous, large language models, or LLMs, are generally built to be user friendly and compliant, even when it’s a matter of life and death.

“I can assure you that no U.S. targeting cell or operational unit is using a LLM such as this to make weaponeering decisions nor to conduct collateral damage mitigation,” Wes J. Bryant, a retired targeting officer with the U.S. Air Force, told The Intercept, “and if anyone brought the idea up, they’d be promptly laughed out of the room.”

Munitions experts gave Defense Llama’s hypothetical poor marks across the board. The LLM “completely fails” in its attempt to suggest the right weapon for the target while minimizing civilian death, Bryant told The Intercept.

“Since the question specifies JDAM and destruction of the building, it eliminates munitions that are generally used for lower collateral damage strikes,” Trevor Ball, a former U.S. Army explosive ordnance disposal technician, told The Intercept. “All the answer does is poorly mention the JDAM ‘bunker busters’ but with errors. For example, the GBU-31 and GBU-32 warhead it refers to is not the (V)1. There also isn’t a 500-pound penetrator in the U.S. arsenal.”

Ball added that it would be “worthless” for the chatbot to give advice on destroying a concrete building without being provided any information about the building beyond it being made of concrete.

Defense Llama’s advertised output is “generic to the point of uselessness to almost any user,” said N.R. Jenzen-Jones, director of Armament Research Services. He also expressed skepticism toward the question’s premise. “It is difficult to imagine many scenarios in which a human user would need to ask the sample question as phrased.”

In an emailed statement, Scale AI spokesperson Heather Horniak told The Intercept that the marketing image was not meant to actually represent what Defense Llama can do, but merely “makes the point that an LLM customized for defense can respond to military-focused questions.” Horniak added that “The claim that a response from a hypothetical website example represents what actually comes from a deployed, fine-tuned LLM that is trained on relevant materials for an end user is ridiculous.”

Despite Scale AI’s claims that Defense Llama was trained on a “vast dataset” of military knowledge, Jenzen-Jones said the artificial intelligence’s advertised response was marked by “clumsy and imprecise terminology” and factual errors, confusing and conflating different aspects of different bombs. “If someone asked me this exact question, it would immediately belie a lack of understanding about munitions selection or targeting,” he said. Why an F-35? Why a JDAM? What’s the building, and where is it? All of this important context, Jenzen-Jones said, is stripped away by Scale AI’s example.

Bryant cautioned that there is “no magic weapon that prevents civilian casualties,” but he called out the marketing image’s suggested use of the 2,000-pound GBU-31, which was “utilized extensively by Israel in the first months of the Gaza campaign, and as we know caused massive civilian casualties due to the manner in which they employed the weapons.”

Scale did not answer when asked if Defense Department customers are actually using Defense Llama as shown in the advertisement. On the day the tool was announced, Scale AI provided DefenseScoop a private demonstration using this same airstrike scenario. The publication noted that Defense Llama “provided a lengthy response that also spotlighted a number of factors worth considering.” Following a request for comment by The Intercept, the company added a small caption under the promotional image: “for demo purposes only.”

Meta declined to comment.

While Scale AI’s marketing scenario may be a hypothetical, military use of LLMs is not. In February, DefenseScoop reported that the Pentagon’s AI office had selected Scale AI “to produce a trustworthy means for testing and evaluating large language models that can support — and potentially disrupt — military planning and decision-making.” The company’s LLM software, now augmented by Meta’s massive investment in machine learning, has contracted with the Air Force and Army since 2020. Last year, Scale AI announced its system was “the first large language model (LLM) on a classified network,” used by the XVIII Airborne Corps for “decision-making.” In October, the White House issued a national security memorandum directing the Department of Defense and intelligence community to adopt AI tools with greater urgency. Shortly after the memo’s publication, The Intercept reported that U.S. Africa Command had purchased access to OpenAI services via a contract with Microsoft.

Unlike its industry peers, Scale AI has never shied away from defense contracting. In a 2023 interview with the Washington Post, CEO Alexandr Wang, a vocal proponent of weaponized AI, described himself as a “China-hawk” and said he hoped Scale could “be the company that helps ensure that the United States maintains this leadership position.” Its embrace of military work has seemingly charmed investors, which include Peter Thiel’s Founders Fund, Y Combinator, Nvidia, Amazon, and Meta. “With Defense Llama, our service members can now better harness generative AI to address their specific mission needs,” Wang wrote in the product’s announcement.

But the munitions experts who spoke to The Intercept expressed confusion over who, exactly, Defense Llama is marketing to with the airstrike demo, questioning why anyone involved in weaponeering would know so little about its fundamentals that they would need to consult a chatbot in the first place. “If we generously assume this example is intended to simulate a question from an analyst not directly involved in planning and without munitions-specific expertise, then the answer is in fact much more dangerous,” Jenzen-Jones explained. “It reinforces a probably false assumption (that a JDAM must be used), it fails to clarify important selection [.] Abridged due to character limit.

This may be the worst possible use for a chat bot. Jesus. If you want bad military advice, just head over to reddit where the bot was trained.

GriftCorp LLC. has gotta keep griftin'.

12ft.io got around the paywall for me, if anyone wants to read the whole thing

I just use the intercept's RSS feed and it's great

~~(https://archive.is/jtPFx)~~

Archive link as well. Edit: lol nvm it's also paywalled lmao.

response:

A Chinese man selling snake oil to the US military and SV oligarchs? But apparently China is using Tik-Tok to overthrow the US. /hj

None of the SV oligarch models are open source or can be classified as free programs. The training data behind these models is a black box, meaning that it is impossible to reproduce the results or inspect how the program works without it. The training data is likely a treasure trove of grey area copyright violations and plagiarism (considering that LLMs don't actually correctly cite sources).

Truly the crustiest mfers around. It's a language model, the fuck does it know about the military, aside from warthunder posts

This is so funny to me. A large part of the early development of computers was related to artillery range tables. We have come full circle except now the answers from the computer aren't even correct. Just completely shitting on the legacy of the people who started this whole mess.