this post was submitted on 05 Feb 2024

Memes

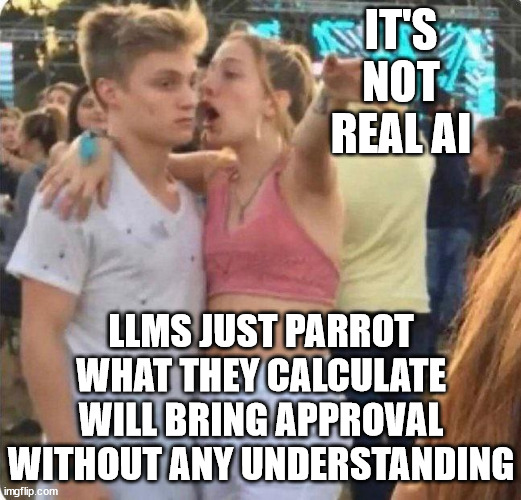

I don't think actual parroting is the problem. The problem is they don't understand a word outside of how it is organized. They can't be told to do simple logic because they don't have a simple understanding of each word in their vocabulary. They can only reorganize things to varying degrees.

https://en.m.wikipedia.org/wiki/Chinese_room

I think they're wrong, as it happens, but that's the argument.

I guess I'm just looking at it from an end user's vantage point. I'm not saying the model can't understand the words it's using. I just don't think it currently understands that specific words refer to real-life objects, and that there are laws of physics that apply to those objects and how they interact with each other.

Like saying there is a guy that exists and is a historical figure means that information is independently verified by physical objects that exist in the world.

In some ways, you are correct. It is coming though. The psychological/neurological word you are searching for is "conceptualization". AI models lack the ability to abstract the text they know into ideas of the objects it describes, at least in the same way humans do. Technically, being able to say "show me a chair" and get images of a chair, then follow up with "show me things related to the last thing you showed me" and get couches, butts, tables, etc. is a conceptual abstraction of a sort. The issue comes when you ask "why are those things related to the first thing?" It will be a little while before a model can describe the abstraction it just did, but it is capable of the first stage at least.

Some systems clearly do that, though. Or are you just talking about LLMs?

Just LLMs.

It doesn't need to understand the words to perform logic, because the logic was already performed by the humans who encoded their knowledge into words. It's not reasoning; the reasoning was already done by humans. It's not perfect, of course, since it's still based on probability, but the fact that it can pull the correct sequence of words to exhibit logic is incredibly powerful. The main hard part of working with LLMs is that they break randomly, so harnessing their power will be a matter of programming in multiple levels of safeguards.
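To make "multiple levels of safeguards" a bit more concrete, here is a rough sketch of what that could look like. Everything in it is made up for illustration: `validated_call`, the `model` callable, and the `required_keys` check are hypothetical names, not any real LLM library's API. The idea is just to never trust a single reply: check its format, check its shape, retry a bounded number of times, and fail explicitly instead of passing garbage along.

```python
import json
from typing import Callable, Optional, Set


def validated_call(
    model: Callable[[str], str],  # hypothetical: any function mapping a prompt to reply text
    prompt: str,
    required_keys: Set[str],
    max_attempts: int = 3,
) -> Optional[dict]:
    """Return the first reply that passes every check, or None if none does."""
    for _ in range(max_attempts):
        reply = model(prompt)

        # Safeguard 1: the reply must parse as JSON at all.
        try:
            data = json.loads(reply)
        except json.JSONDecodeError:
            continue

        # Safeguard 2: it must be an object containing the fields we asked for.
        if not isinstance(data, dict) or not required_keys.issubset(data.keys()):
            continue

        return data

    # Safeguard 3: bounded retries, then an explicit failure the caller must handle.
    return None


# Usage with a stand-in "model" that breaks twice before behaving.
replies = iter(["not json", '["wrong shape"]', '{"answer": "4"}'])
result = validated_call(lambda p: next(replies), "What is 2 + 2?", {"answer"})
print(result)
```

A real setup would probably add more layers on top (checking the answer itself, logging failures, falling back to a human), but the pattern of wrapping the unreliable part in deterministic checks stays the same.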