They're kind of right. LLMs are not general intelligence and there's not much evidence to suggest that LLMs will lead to general intelligence. A lot of the hype around AI is manufactured by VCs and companies that stand to make a lot of money off of the AI branding/hype.

Yeah, this sounds about right. What was OP implying? I'm a bit lost.

I believe they were implying that a lot of the people who say "it's not real AI it's just an LLM" are simply parroting what they've heard.

Which is a fair point, because AI has never meant "general AI". It's an umbrella term for a wide variety of intelligence-like tasks as performed by computers.

Autocorrect on your phone is a type of AI, because it checks the words you type against a database of known words, compares what you typed to those words via a "typo distance", and adds new words to its database when you overrule it so it doesn't make the same mistake.
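To make that concrete, here's a rough sketch of what such an autocorrect could look like. It assumes the "typo distance" is plain Levenshtein edit distance, which is only one option; real phone keyboards are far more elaborate. The `Autocorrect` class and its `suggest`/`overrule` methods are names made up for this illustration.

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: fewest single-character inserts, deletes, or substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,           # delete ca
                            curr[j - 1] + 1,       # insert cb
                            prev[j - 1] + cost))   # substitute (or match)
        prev = curr
    return prev[-1]


class Autocorrect:
    """Hypothetical toy autocorrect: a word set plus a distance threshold."""

    def __init__(self, words, max_distance=2):
        self.words = set(words)
        self.max_distance = max_distance

    def suggest(self, typed: str) -> str:
        if typed in self.words:
            return typed
        # Pick the closest known word; break ties alphabetically.
        best = min(self.words, key=lambda w: (edit_distance(typed, w), w))
        if edit_distance(typed, best) <= self.max_distance:
            return best
        return typed  # nothing close enough, leave the input alone

    def overrule(self, typed: str) -> None:
        # The user rejected the correction, so remember their word.
        self.words.add(typed)
```

With a word list of `["hello", "world", "vehicle"]`, `suggest("helo")` returns `"hello"`. After `overrule("yeet")`, the word "yeet" is treated as known and stops being corrected. No learning in any deep sense, but it still falls under the AI umbrella.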

It's like saying a motorcycle isn't a real vehicle because a real vehicle has two wings, a roof, and flies through the air filled with hundreds of people.

I believe OP is attempting to take on an army of straw men in the form of a poorly chosen meme template.

No, people say this constantly. It's not just a strawman.

Depends on what you mean by general intelligence. I've seen a lot of people confuse Artificial General Intelligence and AI more broadly. Even something as simple as the K-nearest neighbor algorithm is artificial intelligence, as this is a much broader topic than AGI.
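For a sense of how simple k-nearest neighbors is, here's a minimal sketch: classify a point by majority vote among the `k` closest labeled examples. The function name `knn_predict` and the toy data layout are assumptions for illustration, not any particular library's API.

```python
import math
from collections import Counter


def knn_predict(train, query, k=3):
    """train is a list of (point, label) pairs; points are tuples of numbers."""
    # Sort the labeled examples by Euclidean distance to the query, keep the k closest.
    nearest = sorted(train, key=lambda pair: math.dist(pair[0], query))[:k]
    # Majority vote among the neighbors' labels.
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


train = [
    ((0, 0), "a"), ((0, 1), "a"), ((1, 0), "a"),
    ((5, 5), "b"), ((5, 6), "b"), ((6, 5), "b"),
]
print(knn_predict(train, (0.5, 0.5)))  # closest three points are all labeled "a"
```

There is no training step at all here, just distance comparisons and a vote, yet it is a standard textbook AI/ML technique.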

Wikipedia gives two definitions of AGI:

An artificial general intelligence (AGI) is a hypothetical type of intelligent agent which, if realized, could learn to accomplish any intellectual task that human beings or animals can perform. Alternatively, AGI has been defined as an autonomous system that surpasses human capabilities in the majority of economically valuable tasks.

If some task can be represented through text, an LLM can, in theory, be trained to perform it either through fine-tuning or few-shot learning. The question then is how general do LLMs have to be for one to consider them to be AGIs, and there's no hard metric for that question.

I can't pass the bar exam like GPT-4 did, and it also has a lot more general knowledge than me. Sure, it gets stuff wrong, but so do humans. We can interact with physical objects in ways that GPT-4 can't, but it is catching up. Plus Stephen Hawking couldn't move the same way that most people can either and we certainly wouldn't say that he didn't have general intelligence.

I'm rambling but I think you get the point. There's no clear threshold or way to calculate how "general" an AI has to be before we consider it an AGI, which is why some people argue that the best LLMs are already examples of general intelligence.

It depends a lot on how we perceive "intelligence". It's a lot more vague of a term than most, so people have very different views of it. Some people might have the idea of it meaning the response to stimuli & the output (language or art or any other form) being indistinguishable from humans. But many people may also agree that whales/dolphins have the same level of, or superior, "intelligence" to humans. The term is too vague to really prescribe with confidence, and more importantly people often use it to mean many completely different concepts ("intelligence" as a measurable/quantifiable property of either how quickly/efficiently a being can learn or use knowledge or more vaguely its "capacity to reason", "intelligence" as the idea of "consciousness" in general, "intelligence" to refer to amount of knowledge/experience one currently has or can memorize, etc.)

In computer science "artificial intelligence" has always simply referred to a program making decisions based on input. There was never any bar to reach for how "complex" it had to be to be considered AI. That's why Minecraft zombies or shitty FPS bots are "AI", or a simple algorithm made to beat table games is "AI", even though clearly they're not all that smart and don't even "learn".

Knowing that LLMs are just "parroting" is one of the first steps to implementing them in safe, effective ways where they can actually provide value.

LLMs definitely provide value, it's just debatable whether they're real AI or not. I believe they're going to be shoved in a round hole regardless.

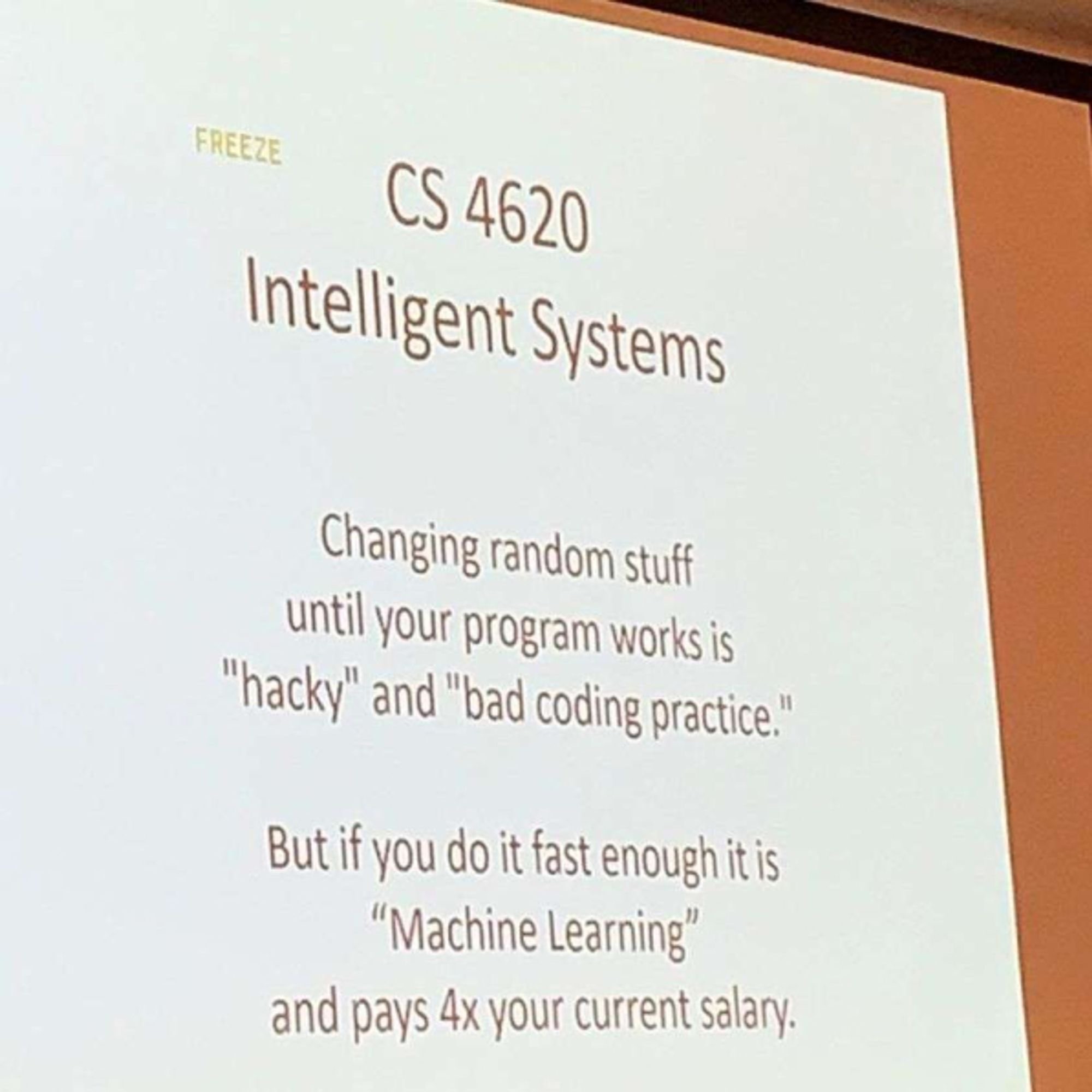

Reminds me of this meme I saw somewhere around here the other week

I think LLMs are neat, and Teslas are neat, and HHO generators are neat, and aliens are neat...

...but none of them live up to all of the claims made about them.

They're predicting the next word without any concept of right or wrong, there is no intelligence there. And it shows the second they start hallucinating.

They are a bit like what you'd get if you took just the creative writing center of a human brain. So they are like one part of a human mind without sentience or understanding or long-term memory. Just the creative part, even though they are mediocre at being creative atm. But it's shocking because we kind of expected that to be the last part of the human mind that could be replicated.

Put enough of these "parts" of a human mind together and you might get a proper sentient mind sooner than later.

Exactly. I'm not saying it's not impressive or even not useful, but one should understand the limitations. For example, you can't reason with an LLM in the sense that you could convince it of your reasoning. It will only respond how most people in the dataset used would have responded (obviously simplified).

I have a silly little model I made for creating Vogon poetry. One of the models is fed from Shakespeare. The system works by predicting the next letter rather than the next word (and whitespace is just another letter as far as it's concerned). Here's one from the Shakespeare generation:

KING RICHARD II:

Exetery in thine eyes spoke of aid.

Burkey, good my lord, good morrow now: my mother's said

This is silly nonsense, of course, and for its purpose, that's fine. That being said, as far as I can tell, "Exetery" is not an English word. Not even one of those made-up English words that Shakespeare created all the time. It's certainly not in the training dataset. However, it does sound like it might be something Shakespeare pulled out of his ass and expected his audience to understand through context, and that's interesting.
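The comment doesn't say what kind of model this is, so the following is only a stand-in: a character-level Markov chain that predicts the next letter from the previous `order` letters. The function names, the `order` parameter, and the requirement that the seed be exactly `order` characters long are all assumptions of this sketch.

```python
import random
from collections import Counter, defaultdict


def train_char_model(text, order=3):
    """Count which character follows each `order`-character context."""
    model = defaultdict(Counter)
    for i in range(len(text) - order):
        context = text[i:i + order]
        model[context][text[i + order]] += 1
    return model


def generate(model, seed, length=80, rng=None):
    """Extend `seed` one character at a time; len(seed) must equal the model's order."""
    rng = rng or random.Random()
    order = len(seed)
    out = seed
    for _ in range(length):
        counts = model.get(out[-order:])
        if not counts:
            break  # this context never appeared in the training text
        chars, weights = zip(*counts.items())
        # Sample the next character in proportion to how often it followed this context.
        out += rng.choices(chars, weights=weights)[0]
    return out
```

Because a model like this only ever looks at a few letters of context, whitespace included, it can stitch together letter sequences that appear nowhere in the training text as whole words, which is one way something like "Exetery" can come out.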

I feel like our current "AIs" are like the Virtual Intelligences in Mass Effect. They can perform some tasks and hold a conversation, but they aren't actually "aware". We're still far off from a true AI like the Geth or EDI.

The way I've come to understand it is that LLMs are intelligent in the same way your subconscious is intelligent.

It works off of kneejerk "this feels right" logic, that's why images look like dreams, realistic until you examine further.

We all have kneejerk responses to situations and questions, but the difference is we filter them through our conscious mind, to apply long-term thinking and our own choices into the mix.

LLMs just keep getting better at the "this feels right" stage, which is why completely novel or niche situations can still trip it up; because it hasn't developed enough "reflexes" for that problem yet.

LLMs are intelligent in the same way books are intelligent. What makes LLMs really cool is that instead of searching at the book or page granularity, they search at the word granularity. It's not thinking, but all the thinking was already done for it by humans who encoded their intelligence into words. It's still incredibly powerful: at its best, it could make it so that no task ever needs to be performed by a human twice, which would bring immense efficiency gains for anything information-based.

EXACTLY. There is no problem solving either (except calculating the most probable text).

Even worse, some of my friends say that Alexa is A.I.

If an LLM is just regurgitating information in a learned pattern and therefore it isn't real intelligence, I have really bad news for ~80% of people.

I know a few people who would fit that definition

Like almost all politicians?

LLMs are a step towards AI in the same sense that a big ladder is a step towards the moon.

Been destroyed for this opinion here. Not many practitioners here, just laymen and mostly techbros in this field. But maybe I haven't found the right node?

I'm into local diffusion models and open source LLMs only, not into the megacorp stuff.

If anything, people really need to start experimenting beyond talking to it like it's human, or in a few years we will end up with a huge AI-illiterate population.

I’ve had someone fight me stubbornly, talking about local LLMs as “an overhyped downloadable chatbot app” and saying the people on fossai are just a bunch of AI-worshipping fools.

I was like, tell me you know absolutely nothing about what you are talking about by pretending to know everything.

Keep seething, OpenAI's LLMs will never achieve AGI that will replace people

As someone who loves Asimov and has read nearly all of his work:

I absolutely bloody hate calling LLMs AI. Without a doubt they are neat, but they are nowhere near the ballpark of AI, and that's okay! They weren't trying to make a synthetic brain; it's just the cultural narrative I am most annoyed at.

I fully back your sentiment OP; you understand as much about the world as any LLM out there and don't let anyone suggest otherwise.

Signed, a "contrarian".

Ok, but so do most humans? So few people actually have true understanding in topics. They parrot the parroting that they have been told throughout their lives. This only gets worse as you move into more technical topics. Ask someone why it is cold in winter and you will be lucky if they say it is because the days are shorter than in summer. That is the most rudimentary "correct" way to answer that question and it is still an incorrect parroting of something they have been told.

Ask yourself, what do you actually understand? On how many topics could you be asked "why?" repeatedly and actually be able to answer more than 4 or 5 times? I know I have a few. I also know which ones I am not able to do that with.

I don't think actual parroting is the problem. The problem is they don't understand a word outside of how it is organized. They can't be told to do simple logic because they don't have a simple understanding of each word in their vocabulary. They can only reorganize things to varying degrees.

https://en.m.wikipedia.org/wiki/Chinese_room

I think they're wrong, as it happens, but that's the argument.

I feel that knowing what you don't know is the key here.

An LLM doesn't know what it doesn't know, and that's where what it spouts can be dangerous.

Of course there's a lot of actual people that applies to as well. And sadly they're often in positions of power.