Seeing a worrying trend of people wanting to cosplay as La Resistance, going dark, hiding from the cops. "Fun" fact, the Gestapo was extremely good at finding, torturing and killing people in the resistance. If people only know you via encrypted messaging, who is gonna raise a ruckus when you're sent to a camp?

I know this shouldn't be surprising, but I still cannot believe people really bounce questions off LLMs like they're talking to a real person. https://ai.stackexchange.com/questions/47183/are-llms-unlikely-to-be-useful-to-generate-any-scientific-discovery

I have just read this paper: Ziwei Xu, Sanjay Jain, Mohan Kankanhalli, "Hallucination is Inevitable: An Innate Limitation of Large Language Models", submitted on 22 Jan 2024.

It says there is a ground truth ideal function that gives every possible true output/fact to any given input/question, and no matter how you train your model, there is always space for misapproximations coming from missing data to formulate, and the more complex the data, the larger the space for the model to hallucinate.

Then he immediately follows up with:

Then I started to discuss with o1. [ . . . ] It says yes.

Then I asked o1 [ . . . ], to which o1 says yes [ . . . ]. Then it says [ . . . ].

Then I asked o1 [ . . . ], to which it says yes too.

I'm not a teacher but I feel like my brain would explode if a student asked me to answer a question they arrived at after an LLM misled them on like 10 of their previous questions.

When I was a lab demonstrator in university, one of the lab exercises was somewhat hard to get right, and our team noticed a huge raft of plagiarism. Not only were multiple students using the exact same code, but it was code that didn’t even work! The course had a policy of instant failure with evidence of plagiarism, which was made clear at the start of the course, and yet this just kept happening. To top it all off, the lab exercises didn’t change from year to year, so the same code kept showing up! This plus a number of other incidents broke me and made me realise I didn’t have it in me to be this kind of educator.

It would be great if this was all a big psyop by big tech to gaslight educators into ending their careers for some kind of mass brainwashing conspiracy, because at least there would be some intention behind it. Instead, we’re poisoning collective consciousness with cyberslop because we think one day the virtual dumbass will reach enlightenment. We don’t actually have a way for that to happen, so we’re just going to metaphorically bash our own heads against rocks in hopes we glitch ourselves into the singularity.

So yeah in short my head would explode too.

Questions To Which the Answer is No, an ongoing series:

Generative AI - Can it actually help with the day-to-day technical work of a practising engineer?

The upcoming Autumn Panel Discussion hosted by the UCD Engineering Graduates Association (EGA) will take place on Tuesday, November 19, 2024, at ESB's Headquarters. This event will explore several pressing topics at the intersection of engineering and artificial intelligence. Attendees will gain insights into current trends in AI, particularly the latest advancements in generative AI tools and platforms. The discussion will feature case studies that highlight the successful integration of AI into real-world engineering processes, offering practical examples of its applications. Additionally, the panel will address the essential skills that engineers need to effectively incorporate AI into their workflows, providing guidance on upskilling for the future. The event will conclude with a Q&A session, allowing participants to engage directly with the panelists and delve deeper into the ethical considerations surrounding AI in engineering.

No, not software engineers, engineer engineers. Yikes!

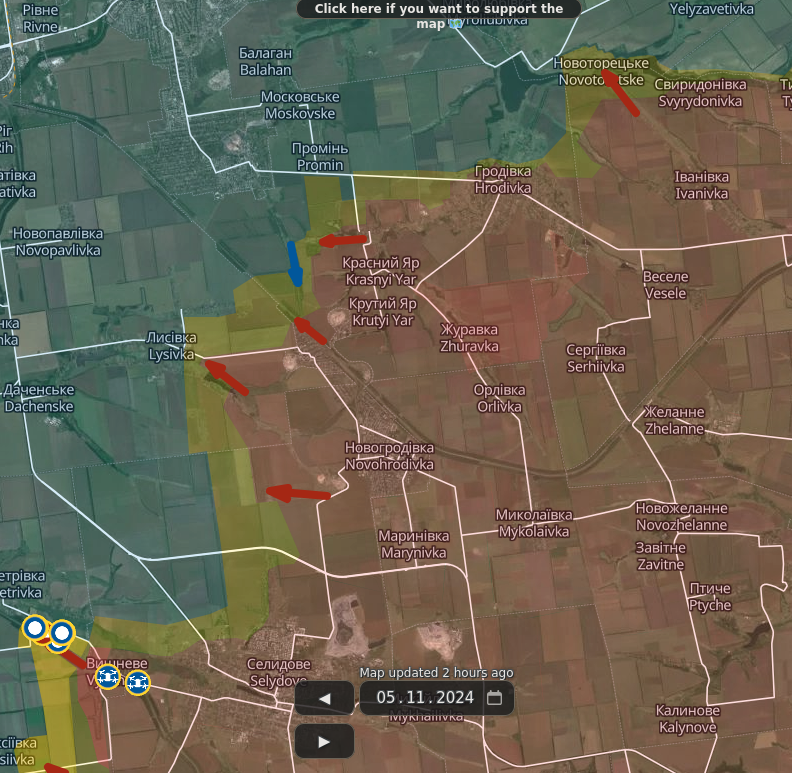

I swear to god post the map with lots of diagonal arrows one more time

hackernews having a normal one (EDIT: Then things got worse)

HN Title: The evolution of nepotism in academia, 1088-1800

In the top comments:

dash2 55 minutes ago | next [–]

Here's an interesting extract:

We find evidence of nepotism for 5–6.6% of scholars’ sons in Protestant and for 29.4% in Catholic universities and academies. Catholic institutions relied more heavily on intra-family human capital transfers. We show that these differences partly explain the divergent path of Catholic and Protestant universities after the Reformation.

This relates to an important paper providing evidence that indeed Protestantism was associated with scientific progress: https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4389708

red016 3 minutes ago | parent | next [–]

i’d be more interested in jewish numbers than protestant or catholic

Ah yes, the famous European Jewish-run universities. You don't have to be an idiot to be anti-Semitic, but it certainly helps.

Audibly rolling my eyes

today's a fucking trip and a half

in a mall I saw some Honor laptop[0] ads with "AI" pitch sauce slathered all over it

later walking between art galleries I saw a roadsign ad for a property sales company promising "more effective strategies using AI" (more effective strategies for.. selling.. houses..? I guess..? (x up for doubt))

and now I get this shit in my mailbox from someone who absolutely purchased a dataset with this address in it:

From: Ai Everything GLOBAL <newsletter@event.aieverythingglobal.com>

Subject: Join the AI Elite—G42, TII, DIEZ, Dell & More!

[0] - I didn't even know they were in ZA, but nope, 2-floor hanging banners and ads allllll over elevator doors and shit

Recently read Brian Merchant's latest piece on the upcoming election, and I felt like making a quick-and-dirty prediction:

If Harris wins, I expect there will be some pretty harsh regulation against Silicon Valley. Putting aside everything but simple political pragmatism:

- Elon Musk's election antics and Trump's support from tech billionaires have shown SV holds a significant amount of power over politics - power which will almost certainly prove a constant thorn in Harris' side. As such, it'd be in her self-interest to kneecap the Valley ASAP.

- Public opinion of Silicon Valley has taken a pounding over the years for a variety of reasons, with the AI slop-nami just the latest and most serious grievance the public has against them. Any tech regulation a Harris presidency makes (especially against AI) is gonna enjoy significant public support from day one.

Fuck it, off-the-cuff prediction: I expect resistance to Silicon Valley is gonna turn violent during Trump's term.

Personal rant: my ongoing search for a replacement for ProtonMail, after they pivoted to AI, almost had me sign up with Tuta because, hey, they looked good and were on my radar originally anyway. Then I found out that they do not offer any IMAP/SMTP access at all.

I mean, I get it, their whole thing is privacy and, yes, storing mail locally on my machine kinda undermines the idea of strong and impenetrable E2E encryption, but I should at least have the choice like I do with Proton Bridge. Because without SMTP Tuta is completely unusable for git send-email. I mean, yes, technically I could copy-paste the output of format-patch into the web client but, first, I am lazy and don't wanna do that, and second, from my experience it rarely works anyway because the clients do some encoding crap so that git am doesn't eat it without cleanup.

Meh. I guess I have to keep looking.